Mixture-of-Experts, explained - plus OpenAI's new models that cost 4× more but work 10× harder

And top remote AI jobs from SGF-ACCORD AI, Talrn and more.

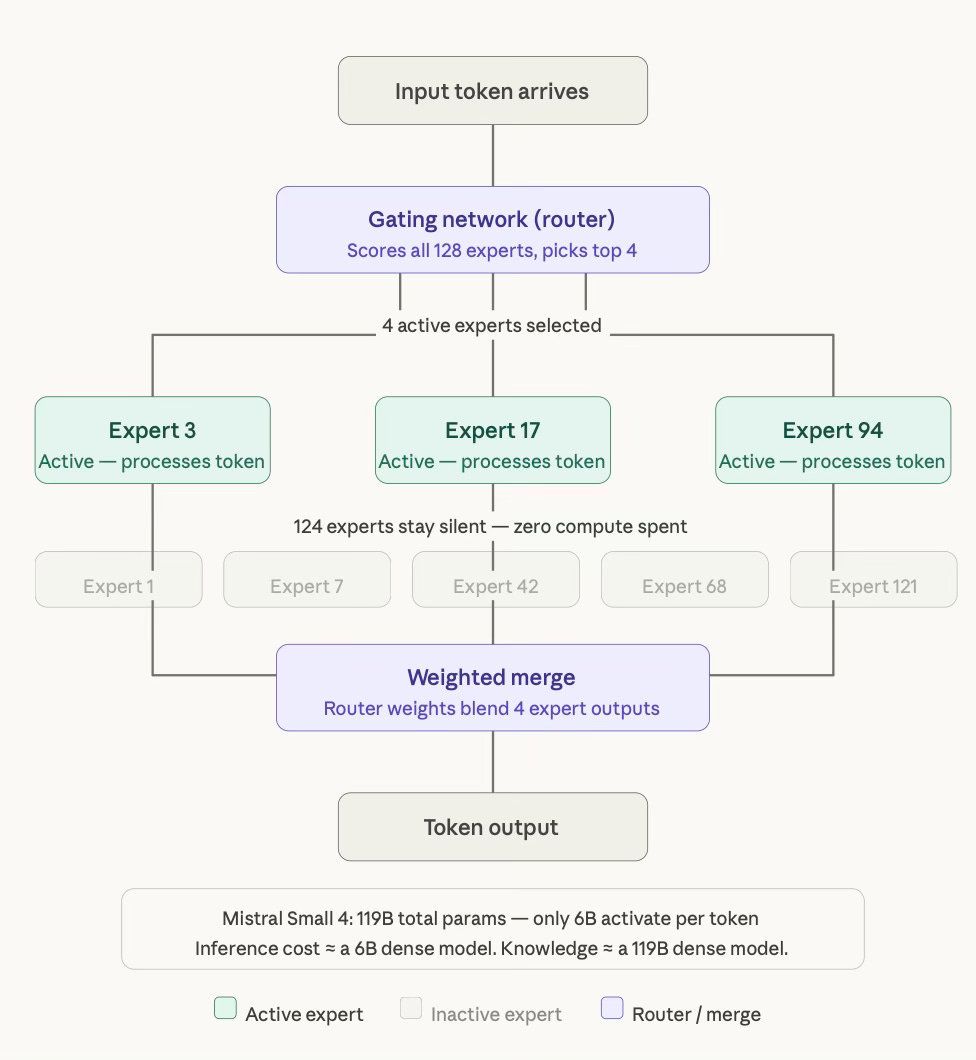

Running a 100B+ parameter model sounds prohibitively expensive — but what if only 6B of those parameters actually fired for any given input? That's the core idea behind Mixture-of-Experts (MoE) Architecture: a model design where a large pool of specialized sub-networks exists, but only a small subset activates per token — giving you the knowledge of a massive model at the inference cost of a tiny one. Mistral just shipped Small 4, a 119B-parameter MoE that activates only 6B parameters per token, unifies four previously separate models into one, and runs 40% faster than its predecessor — all under Apache 2.0. Let's understand the concept a little better first.

1. AI CONCEPT IN 3 MINUTES

Mixture-of-Experts (MoE) Architecture

A Mixture-of-Experts model is like a hospital with 128 specialist doctors — but for any given patient, only 4 doctors are actually called in. The model is huge in total knowledge, but tiny in how much it uses at once.

How It Works

Experts Are Sub-Networks: Inside a MoE model, the standard dense feed-forward layer is replaced by a bank of many smaller “expert” networks — in Mistral Small 4’s case, 128 of them. Each expert specializes in different types of knowledge through training.

A Router Picks the Best Experts: For each incoming token, a lightweight gating network (the “router”) scores all 128 experts and selects the top 4. Only those 4 experts process the token. The other 124 are skipped for that token, so no compute is spent on them. All 128 experts still have to be loaded in memory, because the next token may be routed to any of them.

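Only Active Parameters Cost Money: A dense 119B model runs all 119B parameters for every token. A 119B MoE model that activates 6B parameters per token needs roughly the per-token compute of a 6B dense model, while drawing on the knowledge encoded across all 119B parameters during training. The memory footprint is still that of the full 119B model, so the saving is in compute and latency, not in how much hardware is needed to hold the weights. That’s the MoE economic trick.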

Configurable Reasoning on Top: Mistral Small 4 adds a reasoning_effort toggle: set it to none for fast, direct responses; set it to high for step-by-step chain-of-thought reasoning. One deployed model, one API, with no routing between a fast model and a separate reasoning model required.
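To make the routing step concrete, here is a minimal sketch of top-k expert selection in plain Python. The 128 experts and top 4 come from the description above. Everything else is an assumption for illustration: the random scores stand in for a learned gating network, the toy experts stand in for feed-forward networks, and weighting the chosen experts with a softmax over their scores is one common choice, not a confirmed detail of Mistral Small 4.

```python
import math
import random

NUM_EXPERTS = 128  # from the article
TOP_K = 4          # from the article


def route(scores, k=TOP_K):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are a softmax over the selected scores only, so they sum to 1.
    """
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(scores[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_layer(token, router_scores, experts):
    """Weighted sum of the selected experts' outputs; other experts are never called."""
    return sum(w * experts[i](token) for i, w in route(router_scores))


# Toy experts: each one just scales its input (hypothetical stand-ins).
experts = [lambda x, s=i: x * (s + 1) for i in range(NUM_EXPERTS)]

rng = random.Random(0)
scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in for router output

chosen = route(scores)
output = moe_layer(1.0, scores, experts)
print("experts used:", [i for i, _ in chosen])
print("mixed output:", output)
```

Note that all 128 expert functions exist in the list the whole time; only 4 of them are called per token. That mirrors the split between total and active parameters.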

Example

Dense model (old approach):

Model: 70B parameters

Every token: all 70B parameters activate

Cost per request: expensive

One model per use case: you need separate fast + reasoning models

MoE model (Mistral Small 4):

Model: 119B total parameters, 128 experts

Every token: router picks 4 experts → 6B parameters activate

Inference cost: ~6B dense model equivalent

One deployment handles:

→ Fast chat (reasoning_effort="none")

→ Deep reasoning (reasoning_effort="high")

→ Multimodal (images + text)

→ Agentic coding (Devstral-level)

MoE lets you train a model with the knowledge of 119B parameters but pay for a 6B model at inference. It’s the architecture that makes “one model to rule them all” economically viable.
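The numbers in this example can be checked with a few lines of arithmetic. The sketch below uses the parameter and expert counts quoted above. It assumes per-token compute scales roughly with the number of active parameters, and it uses 2 bytes per parameter (16-bit weights) purely as an illustrative precision.

```python
TOTAL_PARAMS = 119e9   # from the article
ACTIVE_PARAMS = 6e9    # from the article
NUM_EXPERTS = 128      # from the article
ACTIVE_EXPERTS = 4     # from the article


def weight_memory_gb(params, bytes_per_param):
    """Memory needed just to hold the weights, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS   # share of parameters used per token
expert_fraction = ACTIVE_EXPERTS / NUM_EXPERTS   # share of experts used per token
compute_ratio = TOTAL_PARAMS / ACTIVE_PARAMS     # dense vs. MoE per-token compute (rough)
full_weights_gb = weight_memory_gb(TOTAL_PARAMS, 2)  # assumed 16-bit weights

print(f"active share of parameters: {active_fraction:.1%}")
print(f"share of experts used per token: {expert_fraction:.1%}")
print(f"dense-to-MoE compute ratio: about {compute_ratio:.0f}x")
print(f"weights at 2 bytes/param: {full_weights_gb:.0f} GB")
```

The compute side shrinks by roughly a factor of 20, but the weight memory is computed from the total parameter count, not the active one.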

2. TOP 3 DEVELOPMENTS

Mistral Launches Forge + Small 4: One 119B MoE Model That Does the Job of Four

At NVIDIA GTC, Mistral AI unveiled Mistral Forge, a platform that lets enterprises build custom AI models trained on their own proprietary data with internal training recipes and forward-deployed Mistral scientists, eliminating third-party provider lock-in for high-compliance sectors like defense, healthcare, and finance. The centerpiece of the launch is Mistral Small 4, a textbook example of the MoE design explained in today's concept: 119B total parameters, 128 experts with 4 active per token, a 256K context window, native multimodality, and a configurable reasoning_effort parameter that removes the need to deploy separate fast and reasoning models. Small 4 consolidates the capabilities of Mistral Small (instruct), Magistral (reasoning), Pixtral (multimodal), and Devstral (agentic coding) into a single deployment target, and it completes requests 40% faster with 3× the throughput of Mistral Small 3. CEO Arthur Mensch says Mistral is on track to surpass $1B in ARR this year on the back of its enterprise focus.

Details & Specifications:

Training/Architecture: Sparse MoE: 128 experts, 4 active per token; 119B total, 6B active parameters (8B including embedding layers); configurable reasoning_effort per request: none (fast) or high (chain-of-thought); native multimodal: text + image input, text output; Leanstral integration for formal code verification

Models/Checkpoints: Mistral Small 4 (119B); NVFP4 quantized checkpoint on Hugging Face; Mistral Forge (enterprise fine-tuning platform); NVIDIA Nemotron Coalition co-development partnership announced at GTC simultaneously

Performance: AA LCR: 0.72 with 1.6K characters vs Qwen's 5.8–6.1K for comparable results; LiveCodeBench: outperforms GPT-OSS 120B with 20% less output; 40% faster completion; 3× more requests/sec vs Small 3; minimum hardware: 4× H100, 2× H200, or 1× DGX B200

Pricing: Apache 2.0, free and open-weight; available on Mistral API (pay-per-token), Hugging Face (self-host), NVIDIA NIM (production container), AI Studio (free prototyping), build.nvidia.com

Integration/Availability: Available now via vLLM, llama.cpp, SGLang, and Transformers; Mistral API, AI Studio, Hugging Face, NVIDIA NIM, build.nvidia.com; Mistral Forge enterprise access via mistral.ai; NVFP4 checkpoint available on Hugging Face
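The per-request reasoning switch might look like this from the caller's side. Only the parameter name reasoning_effort and its two values, none and high, come from the specs above. The request shape (a chat-style JSON body), the model identifier, and the helper name are assumptions for illustration, so check the provider's API reference before relying on them.

```python
import json

# Hypothetical placeholder; the real model identifier is not given in this issue.
MODEL_ID = "mistral-small-4-placeholder"


def build_chat_request(prompt, deep_reasoning=False):
    """Build one request body; the same model serves both fast and reasoning calls."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        # "none" for fast, direct answers; "high" for step-by-step reasoning.
        "reasoning_effort": "high" if deep_reasoning else "none",
    }


fast = build_chat_request("Summarize this changelog in one line.")
deep = build_chat_request("Find the bug in this scheduling algorithm.", deep_reasoning=True)

print(json.dumps(fast, indent=2))
print(json.dumps(deep, indent=2))
```

Both requests target the same model; only one field changes, which is the point of replacing a separate fast model and reasoning model with one deployment.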

Anthropic Launches Dispatch: Control Your Desktop AI Agent from Your Phone

Anthropic launched Dispatch as a research preview inside Claude Cowork — software that allows users to control a Mac-based Cowork session from their iPhone via the Claude mobile app, using a QR code to pair the desktop session with the phone. The pitch is simple: fire off a task from your phone, walk away, and come back to finished work — Claude runs on your computer with full access to local files, connectors, and plugins, while you steer from anywhere via a single persistent conversation thread that stays in sync across devices. It mirrors the Remote Control feature launched for Claude Code three weeks earlier, extending the same mobile-session bridge to non-developer workflows. Early testing found Dispatch works reliably with Connectors but is slow and inconsistent, succeeding roughly half the time on complex tasks — consistent with Anthropic's "research preview" framing and promise of rapid updates.

Details & Specifications:

Training/Architecture: Local execution model: Claude runs on the user's Mac, local MCP servers and tools stay on device; only chat messages and tool results flow through Anthropic's encrypted API bridge; no inbound ports opened (the local machine polls the API for instructions); QR-pairing system connects desktop and mobile sessions; single persistent conversation thread per session

Models/Checkpoints: Claude Opus 4.6 / Sonnet 4.6 as the reasoning layer; Cowork Connectors supported (email, Notion, local file OCR); sandboxed VM for security; no multiple simultaneous threads in current preview

Performance: MacStories early testing: ~50% success rate on complex multi-step tasks; email summarization and Notion data retrieval worked well; sharing outputs to other apps unreliable; no task-complete notifications currently; Claude Code annualized run rate: $2.5B as of February 2026

Pricing: Dispatch available now for Max subscribers ($100–$200/month); Pro access ($20/month) within days; Team and Enterprise not included in research preview

Integration/Availability: Requires updating Claude Mac app; Dispatch option appears in Cowork settings; Mac must be awake with Claude app open; controllable from iPhone via Claude mobile app; broader device support and reliability updates promised in coming weeks
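The "no inbound ports, local machine polls" design is a general pattern, and a toy version fits in a few lines. This is a sketch of the pattern only, not Anthropic's implementation: the Bridge class is an in-memory stand-in for a remote API, and every name in it is hypothetical.

```python
import queue


class Bridge:
    """In-memory stand-in for a remote relay (hypothetical, not a real API)."""

    def __init__(self):
        self._instructions = queue.Queue()
        self.results = []

    def send_from_phone(self, text):
        """The phone side drops an instruction into the relay."""
        self._instructions.put(text)

    def fetch_instruction(self):
        """Called by the desktop; returns None when nothing is pending."""
        try:
            return self._instructions.get_nowait()
        except queue.Empty:
            return None

    def post_result(self, result):
        """The desktop reports the outcome back through the relay."""
        self.results.append(result)


def run_local_tool(instruction):
    """Placeholder for work done on the local machine with local files and tools."""
    return f"done: {instruction}"


def poll_once(bridge):
    """One outbound poll: fetch, run locally, report. Returns True if work was done."""
    instruction = bridge.fetch_instruction()
    if instruction is None:
        return False
    bridge.post_result(run_local_tool(instruction))
    return True


bridge = Bridge()
bridge.send_from_phone("summarize inbox")
while poll_once(bridge):  # a real client would sleep between polls instead of exiting
    pass
print(bridge.results)
```

Because the desktop always initiates the connection, nothing on the local network has to accept incoming traffic, and only instructions and results cross the relay.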

OpenAI Ships GPT-5.4 Mini and Nano: Near-Flagship Intelligence, But Up to 4× Pricier Than Before

OpenAI launched GPT-5.4 mini and nano — smaller, faster variants of GPT-5.4 optimized for coding, tool use, multimodal reasoning, and high-volume API and sub-agent workloads. The models close most of the performance gap with the flagship at a fraction of the cost: GPT-5.4 mini scores 54.4% on SWE-Bench Pro versus the flagship's 57.7%, and 72.1% on OSWorld-Verified versus 75.0% — while running more than 2× faster and consuming only 30% of GPT-5.4's Codex quota. The catch: GPT-5.4 mini costs 3× more per million input tokens than GPT-5 mini, and GPT-5.4 nano is up to 4× pricier than its predecessor — pricing OpenAI justifies through the significant performance jump that brings compact models near flagship level. OpenAI is explicitly positioning these as sub-agent models — letting GPT-5.4 handle planning while mini and nano execute delegated parallel subtasks.
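The planner-plus-sub-agents split can be sketched as a simple fan-out. The functions below are stand-ins, not model calls: plan plays the role of the flagship model, run_subagent plays the role of a mini or nano call, and all names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def plan(task):
    """Stand-in for the planning model: split a task into independent subtasks."""
    steps = ("search codebase", "scan files", "process docs")
    return [f"{task}: {step}" for step in steps]


def run_subagent(subtask):
    """Stand-in for a small-model call; a real version would call a model API."""
    return f"[ok] {subtask}"


def orchestrate(task, max_workers=3):
    """Plan once, then run the subtasks in parallel and collect results in order."""
    subtasks = plan(task)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_subagent, subtasks))  # map preserves input order


for line in orchestrate("fix flaky test"):
    print(line)
```

The economics follow from the shape: one expensive planning call, then many cheap calls that run side by side.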

Details & Specifications:

Training/Architecture: Sub-agent architecture: GPT-5.4 plans and coordinates; mini/nano execute parallel subtasks (codebase search, file scanning, doc processing); both support text + image input, structured outputs, function calls, and tool use; 400K token context, 128K max output

Models/Checkpoints: GPT-5.4 mini: SWE-Bench Pro 54.4%, OSWorld-V 72.1%, 2× faster than GPT-5 mini; GPT-5.4 nano: beats GPT-5 mini on SWE-Bench Pro and Toolathlon; mini available in API, Codex, and ChatGPT; nano API-only

Performance: Mini: 54.4% SWE-Bench Pro (vs 45.7% GPT-5 mini); Nano: beats GPT-5 mini on SWE-Bench Pro and Toolathlon; mini burns 30% of GPT-5.4's Codex quota = ~3× throughput

Pricing: Mini: $0.75/M input, $4.50/M output (vs GPT-5 mini's $0.25/$2.00); Nano: $0.20/M input, $1.25/M output; Batch API: 50% discount on both; prompt caching: 90% off cached context; GPT-5.4 nano API-only; mini in ChatGPT + Codex + API

Integration/Availability: GPT-5.4 mini available now in ChatGPT, Codex, and API; GPT-5.4 nano API-only; both support structured outputs, function calls, tool use; 10% surcharge for regional data residency endpoints
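To see what the price change means for a workload, here is a small cost calculator built from the prices listed above. The dictionary keys are labels for this sketch, not API model identifiers. Applying the batch discount on top of the caching discount is an assumption; the listing does not say whether the two stack.

```python
# USD per million tokens, as listed in this issue.
PRICES = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5-mini": {"input": 0.25, "output": 2.00},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
}
BATCH_DISCOUNT = 0.50  # Batch API: 50% off
CACHE_DISCOUNT = 0.90  # prompt caching: 90% off cached input tokens


def request_cost(model, input_tokens, output_tokens, cached_fraction=0.0, batch=False):
    """Estimated cost in USD. Assumes batch and cache discounts stack (not confirmed)."""
    price = PRICES[model]
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    cost = (
        fresh * price["input"]
        + cached * price["input"] * (1 - CACHE_DISCOUNT)
        + output_tokens * price["output"]
    ) / 1_000_000
    return cost * (1 - BATCH_DISCOUNT) if batch else cost


input_ratio = PRICES["gpt-5.4-mini"]["input"] / PRICES["gpt-5-mini"]["input"]
output_ratio = PRICES["gpt-5.4-mini"]["output"] / PRICES["gpt-5-mini"]["output"]
new_cost = request_cost("gpt-5.4-mini", 1_000_000, 100_000)
old_cost = request_cost("gpt-5-mini", 1_000_000, 100_000)

print(f"input price ratio: {input_ratio:.2f}x, output price ratio: {output_ratio:.2f}x")
print(f"1M in + 100K out: ${new_cost:.2f} on GPT-5.4 mini vs ${old_cost:.2f} on GPT-5 mini")
```

The input price triples while the output price rises by a smaller factor, so the effective increase for a given workload depends on its mix of input and output tokens.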

3. AI CAREER OPPORTUNITIES

1. Manager – AI Engineering

📍 Deployd.io | Remote | Full-Time

🔗 Apply Here

2. AI Software Engineer

📍 SGF-ACCORD AI | Remote | Full-Time

🔗 Apply Here

3. Gen AI/ML

📍 RSK IT Solutions | Remote | Full-Time

🔗 Apply Here

4. Remote Machine Learning Internship

📍 Talrn | Remote | Full-Time

🔗 Apply Here

We track real AI shifts - with facts, without hype

• The most important daily AI advancements, summarized & with tech specs

• One critical AI concept explained in simple terms

• Curated AI jobs and projects, all remote-friendly

To make the most of AI, subscribe to the newsletter and share it with other AI professionals.

Stay connected:

Deep Tech Stars App | WhatsApp: AI Jobs | WhatsApp: AI Discussions | LinkedIn | Instagram